Most businesses think about Google in terms of rankings, traffic, and leads. But before a page can rank, Google has to find it. That job starts with Googlebot.

Googlebot is Google’s web-crawling system. It discovers pages, revisits existing ones, and helps Google understand what exists across the web. If a business wants stronger organic visibility, it needs more than good content and keywords. It also needs a site that Googlebot can access, crawl, and interpret efficiently.

For organizations investing in digital growth, whether through SEO, website development, or broader digital transformation, understanding Googlebot is foundational. It explains why some pages get indexed quickly, why others seem invisible, and why technical SEO matters just as much as on-page optimization.

Key Takeaways

- Googlebot is Google’s web crawler, and it must discover, access, and interpret your pages before they can be indexed or rank in search.

- Strong SEO depends on crawlability, so clean site architecture, clear internal linking, and XML sitemaps help Googlebot find important content faster.

- Crawling, indexing, and ranking are different stages, which means a page can be crawled by Googlebot and still fail to appear in search results.

- Technical issues like blocked resources, weak JavaScript rendering, duplicate URLs, and server errors can limit how efficiently Googlebot crawls your site.

- Large or complex websites need active crawl management to avoid wasting crawl budget on thin, duplicate, or parameter-based pages.

- Crawlers and fetchers serve different roles, so understanding which Google system is requesting your content helps teams diagnose SEO and access problems more accurately.

What is a web crawler?

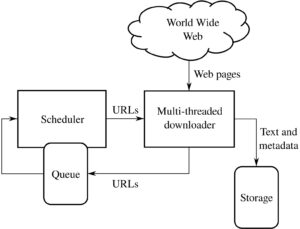

A web crawler is an automated program that moves from page to page across the internet, collecting information about websites. It follows links, reads page signals, and sends data back to a search engine so those pages can potentially be indexed and shown in search results.

In simple terms, a crawler is like a very fast researcher. It visits a webpage, looks at what is there, notes links to other pages, and keeps going. Search engines rely on crawlers because the web is far too large to review manually.

Googlebot is Google’s crawler, but it is not the only one in existence. Bing has its own crawler. SEO tools have crawlers. Some platforms use crawlers for monitoring, auditing, or aggregating content. What makes Googlebot especially important is the scale of Google Search and its influence on online discoverability.

A crawler typically performs a few core tasks:

- Discovers URLs through links, XML sitemaps, redirects, and other signals

- Requests pages from servers

- Reads content and technical elements such as HTML, metadata, canonicals, and structured data

- Finds additional links to continue crawling

- Passes information to indexing systems that determine whether and how a page should appear in search
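The tasks above can be sketched in a few lines of Python. This is a toy illustration, not how Googlebot is built: the `FAKE_SITE` dictionary, the `crawl` function, and the example.com URLs are all hypothetical, and pages are read from memory instead of being requested from a server.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


# Hypothetical site: URL -> HTML. A real crawler would fetch these over HTTP.
FAKE_SITE = {
    "https://example.com/": '<a href="/services">Services</a> <a href="/blog">Blog</a>',
    "https://example.com/services": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/blog/post-1">Post</a>',
    "https://example.com/blog/post-1": "<p>No links here.</p>",
}


def crawl(start_url, fetch):
    """Breadth-first crawl: request a page, extract links, queue unseen URLs."""
    queue = deque([start_url])
    seen = {start_url}
    crawled = []
    while queue:
        url = queue.popleft()
        html = fetch(url)          # "request the page from the server"
        if html is None:           # page could not be retrieved
            continue
        crawled.append(url)        # a real system would pass the page on to indexing
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return crawled


crawled = crawl("https://example.com/", FAKE_SITE.get)
print(crawled)
```

Note that the post at `/blog/post-1` is only reached because the blog page links to it. Remove that link and the toy crawler never finds the page, which is the same discovery problem real sites run into.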

This does not mean every crawled page gets indexed. Crawling is the discovery and access stage. Indexing is the storage and evaluation stage. Ranking comes later.

That distinction matters for businesses. A page can exist on a site and still fail to generate organic traffic because Googlebot cannot reach it properly, because the page is blocked, or because Google decides it is not useful enough to index.

Modern crawlers also deal with more than static HTML. Many websites rely on JavaScript, dynamic content, faceted navigation, and API-driven interfaces. That complexity can create friction. A page may look fine to a human visitor but present challenges to a crawler if critical content loads in ways that are difficult to process.

This is one reason technical SEO continues to matter. Strong design alone is not enough. The site has to communicate clearly with search engines. For businesses pursuing long-term online growth, that often means aligning development, content, and SEO instead of treating them as separate projects.

How Googlebot visits your site

Googlebot does not browse a website the way a person does. It starts with a list of known URLs, often gathered from previous crawls, links from other sites, XML sitemaps, and feeds submitted through Google Search Console. From there, it decides what to fetch, how often to return, and how much crawling a site can reasonably support.

At a high level, the process looks like this:

- Discovery: Google finds a URL through internal links, backlinks, sitemaps, redirects, or prior knowledge.

- Crawling: Googlebot requests the page from the server.

- Rendering: If needed, Google processes JavaScript to see content generated after the initial load.

- Evaluation: Google assesses page signals, content quality, duplication, canonicals, and crawl directives.

- Link extraction: Googlebot identifies more URLs to add to its crawl queue.

Several factors influence how effectively Googlebot visits a site.

Crawl budget and crawl demand

For larger websites especially, Google considers something often called crawl budget. This is not a fixed score shown in a dashboard, but rather a practical combination of two things: how much crawling a server can handle and how much Google wants to crawl the site.

If a site is fast, healthy, and frequently updated, Google may crawl it more actively. If the site returns repeated errors, responds slowly, or contains many low-value URLs, crawling may become less efficient.

For enterprise sites, eCommerce catalogs, publishers, and businesses with large service or location page sets, crawl management becomes a real SEO issue. Thousands of parameter-based URLs or thin duplicates can waste Googlebot’s attention.
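One rough way to see how much parameter clutter a site has is to normalize a list of URLs and group the ones that collapse to the same address. The sketch below assumes a hypothetical set of parameters (`IGNORED_PARAMS`) that do not change page content; which parameters are actually safe to ignore depends entirely on the site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters never change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def normalize(url):
    """Lowercase the host, drop ignored parameters, and sort the rest."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in IGNORED_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def group_duplicates(urls):
    """Map each normalized URL to the raw URLs that collapse into it."""
    groups = {}
    for url in urls:
        groups.setdefault(normalize(url), []).append(url)
    return groups


urls = [
    "https://Example.com/shoes?utm_source=newsletter&color=red",
    "https://example.com/shoes?color=red&ref=homepage",
    "https://example.com/shoes?color=blue",
]
groups = group_duplicates(urls)
for canonical, variants in groups.items():
    print(len(variants), canonical)
```

Here three crawlable URLs turn out to be two distinct pages. On a large catalog the same check can surface thousands of variants competing for crawl attention.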

Internal linking shapes crawler behavior

Googlebot relies heavily on links. Strong internal linking helps it discover important pages and understand which content matters. When key pages are buried deep in the site or orphaned entirely, Googlebot may take longer to find them, or miss them altogether.

This is why site architecture is not just a UX decision. It is also a crawlability decision. Clean navigation, contextual links, and logical hierarchy help Googlebot move through a site with less friction.
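Click depth and orphaned pages can both be measured from a simple map of internal links. The following sketch assumes a hypothetical `links` dictionary (page to the pages it links to), such as one exported from a site audit tool; the paths are made up for illustration.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map.
links = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/services/seo"],
    "/old-landing-page": ["/"],  # links out, but nothing links to it
}

depths = click_depths(links, "/")
all_pages = set(links) | {t for targets in links.values() for t in targets}
orphans = sorted(all_pages - set(depths))

print(depths)
print(orphans)
```

Pages with a high depth value are candidates for better internal links, and anything in `orphans` cannot be reached by following links from the homepage at all.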

Robots directives and access controls

Site owners can influence crawling through robots.txt, meta robots tags, canonical tags, and server-side directives. These signals do different jobs, and confusing them can cause problems.

- robots.txt can discourage or block crawling of certain paths

- noindex tells search engines not to index a page

- canonical tags indicate the preferred version of similar pages

- password protection or login walls can prevent access entirely
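To make the robots.txt case concrete, here is a small sketch using Python's standard `urllib.robotparser` module. The robots.txt content and URLs are hypothetical, and Python's parser only approximates how Google interprets the file (for example, it does not handle wildcard patterns in paths), so treat the results as a rough check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search

User-agent: Googlebot
Disallow: /internal/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

services_ok = parser.can_fetch("Googlebot", "https://example.com/services/")
internal_ok = parser.can_fetch("Googlebot", "https://example.com/internal/report")
cart_ok = parser.can_fetch("Googlebot", "https://example.com/cart/")
other_bot_cart_ok = parser.can_fetch("SomeOtherBot", "https://example.com/cart/")

print(services_ok, internal_ok, cart_ok, other_bot_cart_ok)
```

The third result is the one that catches people out. Because this file has a group written specifically for Googlebot, the wildcard group no longer applies to it, so `/cart/` is open to Googlebot while staying blocked for other bots.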

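A common business mistake is assuming blocked pages will still be fully understood by Google. If crawling is blocked in robots.txt, Googlebot cannot fetch the page, so it cannot read the content or any noindex tag placed on it. That limits how well Google can evaluate the page, and the URL can still end up in search results without a useful description if other pages link to it.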

Rendering and JavaScript

Googlebot can render many JavaScript-powered pages, but that does not mean every implementation is equally SEO-friendly. Heavy client-side rendering, delayed content injection, or poorly handled scripts can reduce visibility.
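A quick first check is whether critical content is present in the HTML the server sends, before any JavaScript runs. The sketch below is a simplified test under that assumption: `missing_from_initial_html` is a hypothetical helper, the sample page is invented, and it says nothing about what Google's renderer eventually sees.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script> and <style> blocks."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_initial_html(html, phrases):
    """Return the phrases that do not appear in the unrendered HTML text."""
    parser = VisibleText()
    parser.feed(html)
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical page: the heading is in the HTML, the offer is injected by a script.
html = (
    "<html><body><h1>Acme Plumbing</h1><div id='app'></div>"
    "<script>render('Emergency repairs 24/7')</script></body></html>"
)
missing = missing_from_initial_html(html, ["Acme Plumbing", "Emergency repairs"])
print(missing)
```

Anything reported as missing depends on rendering to become visible, which makes it a good candidate for closer testing.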

This is where development decisions directly affect search performance. Businesses working on redesigns, app-like web experiences, or custom platforms often benefit from involving technical SEO early. A digital partner such as AGR Technology would usually look at crawlability, rendering behavior, and indexation risk alongside design and functionality, not after launch, when fixing issues is more expensive.

Server responses matter

Googlebot pays attention to HTTP status codes. A 200 means the page is available. A 301 signals a permanent redirect. A 404 shows the page is missing. Repeated 5xx server errors can slow or disrupt crawling.
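When reviewing crawl data, it helps to group responses into these broad classes. The sketch below uses hypothetical paths and labels; the groupings are a simplification for reporting, not a description of how Google treats each individual code.

```python
from collections import Counter


def classify_status(code):
    """Group an HTTP status code into a broad crawl-health bucket."""
    if 200 <= code < 300:
        return "ok"
    if code in (301, 308):
        return "permanent redirect"
    if code in (302, 303, 307):
        return "temporary redirect"
    if code in (404, 410):
        return "missing"
    if 500 <= code < 600:
        return "server error"
    return "other"


# Hypothetical crawl results: path -> status code returned.
responses = {"/": 200, "/old": 301, "/gone": 404, "/api": 503, "/checkout": 500}
summary = Counter(classify_status(code) for code in responses.values())
print(summary)
```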

If a website frequently times out or returns unstable responses, Googlebot may reduce crawl activity to avoid overloading the server. That is one more reason why technical performance underpins SEO.

In practice, businesses that want Googlebot to visit their sites efficiently should focus on a few basics: maintain a clean architecture, submit XML sitemaps, improve internal linking, reduce low-value URL clutter, and monitor crawl issues in Search Console.
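As an illustration of the sitemap step, the following sketch builds a minimal XML sitemap with Python's standard library. The `build_sitemap` function, URLs, and dates are hypothetical; most CMS platforms and SEO plugins generate this file automatically.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap string from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and dates.
sitemap_xml = build_sitemap(
    [
        ("https://example.com/", "2025-01-10"),
        ("https://example.com/services", "2025-01-08"),
    ]
)
print(sitemap_xml)
```

The resulting file lists only the pages a business actually wants crawled, which is why keeping low-value URLs out of the sitemap matters as much as putting important ones in.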

Why Is Googlebot Important for SEO?

Googlebot matters for SEO because it is the entry point to search visibility. If Google cannot reliably crawl a page, that page has little chance of being indexed and ranked well.

That sounds obvious, but it is often overlooked. Many businesses focus heavily on keyword targeting, content production, and backlinks while ignoring whether Googlebot can access their pages efficiently. It is a bit like printing a great brochure and leaving it in a locked room.

Here is why Googlebot has such a direct impact on SEO performance.

It controls discovery

New pages do not help a business if search engines do not find them. Whether it is a new service page, product page, blog article, or case study, Googlebot usually needs a clear path to discover it.

This is especially relevant for growing businesses with frequent website updates. If content is published without internal links, without sitemap inclusion, or on a technically weak platform, discovery can lag.

It influences indexing

Googlebot’s visit is part of the process that leads to indexing. During and after crawling, Google evaluates content uniqueness, usefulness, duplication, and technical signals. Not every page deserves a spot in the index.

Pages with thin content, duplicate copy, broken canonicals, or mixed signals may be crawled but not indexed. For SEO teams, that distinction is critical. “Crawled” is not the same as “eligible to rank.”
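Two of the most common mixed signals, a noindex tag and a canonical pointing at a different URL, can be spotted directly in a page's HTML. This is a minimal sketch with a hypothetical `index_signals` helper; it ignores other sources of the same signals, such as HTTP headers.

```python
from html.parser import HTMLParser


class IndexSignalParser(HTMLParser):
    """Captures the meta robots content and the canonical link, if present."""

    def __init__(self):
        super().__init__()
        self.robots = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots = (attrs.get("content") or "").lower()
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")


def index_signals(url, html):
    """List signals on the page that may keep this URL out of the index."""
    parser = IndexSignalParser()
    parser.feed(html)
    issues = []
    if parser.robots and "noindex" in parser.robots:
        issues.append("noindex")
    if parser.canonical and parser.canonical != url:
        issues.append("canonical points elsewhere")
    return issues


# Hypothetical page that sends both signals at once.
html = (
    '<head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/shoes"></head>'
)
issues = index_signals("https://example.com/shoes?color=red", html)
print(issues)
```

A page that reports both issues is telling Google two different things, which is exactly the kind of mixed signal worth cleaning up.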

It helps Google understand site quality and structure

Googlebot does not judge a site the way a human does, but the crawl path reveals a lot. Clear hierarchy, consistent internal linking, working canonicals, and strong technical health make it easier for Google to interpret a site.

Messy architecture sends the opposite message. Duplicate sections, endless filters, redirect chains, and inaccessible key pages create noise. That noise can dilute SEO performance, especially on larger sites.

It affects speed to visibility

When Googlebot regularly visits a site and finds meaningful updates, new or revised pages can appear in search faster. For businesses publishing time-sensitive content, launching offers, or expanding into new markets, this matters.

Fast indexing is not guaranteed, but crawl efficiency improves the odds.

It reveals technical SEO problems

Watching how Googlebot interacts with a site often uncovers bigger issues:

- Important pages blocked in robots.txt

- Duplicate parameter URLs consuming crawl resources

- Broken internal links

- Soft 404 pages

- Redirect loops

- JavaScript content that fails to render properly

- Slow server response times
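Redirect chains and loops from this list are straightforward to detect once the redirects are written down as a map. The sketch below assumes a hypothetical `redirects` dictionary (source path to target path), and the `max_hops` limit of 10 is an arbitrary cutoff chosen for the example.

```python
def follow_redirects(start, redirects, max_hops=10):
    """Follow a redirect map and report the path taken plus a status label."""
    path = [start]
    current = start
    while current in redirects:
        current = redirects[current]
        if current in path:                 # we have been here before
            return path + [current], "loop"
        path.append(current)
        if len(path) - 1 > max_hops:        # give up on very long chains
            return path, "too many hops"
    # More than one hop means the first URL should point straight at the last.
    return path, "chain" if len(path) > 2 else "ok"


# Hypothetical redirect rules.
redirects = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x", "/old": "/new"}

chain = follow_redirects("/a", redirects)
loop = follow_redirects("/x", redirects)
single = follow_redirects("/old", redirects)
print(chain, loop, single)
```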

In other words, Googlebot is not just a visitor. It is also a diagnostic lens. If the crawler struggles, users may eventually struggle too.

It matters even more for large or complex sites

Small brochure-style websites usually have fewer crawl challenges. But as a business scales, adding products, locations, service categories, knowledge hubs, support docs, or multilingual sections, crawl management becomes more strategic.

That is where SEO overlaps with web development, content governance, and platform architecture. Businesses investing in digital transformation often discover that search visibility is not just a marketing output. It is the result of coordinated technical decisions.

And that is the practical takeaway: Googlebot is important because SEO begins before rankings. It begins with access, discovery, and clarity.

Crawlers versus fetchers: a distinction that matters

People often use the terms crawler and fetcher as if they mean the same thing. They are related, but not identical.

A crawler is designed to systematically discover and revisit URLs across the web. It follows links, builds queues, and helps search engines map content at scale. Googlebot, in the broad sense, is a crawling system.

A fetcher, by contrast, usually retrieves a specific URL or resource on demand. It is less about broad exploration and more about targeted access.

Why does this distinction matter? Because different Google systems may request content for different reasons.

For example, a crawler may discover and analyze pages for Search indexing, while another system may fetch a page to test rendering, verify structured data, check ad quality, or generate previews. Google also uses specialized user agents for certain products and functions.

From a business and SEO standpoint, this matters in a few ways.

Log files tell a more nuanced story

When technical teams review server logs, they may see different Google user agents. Not all of them behave the same way or serve the same purpose. Understanding whether a request came from a crawler, a mobile crawler, a rendering-related system, or another fetcher helps teams diagnose issues more accurately.

A spike in requests does not always mean broad crawling activity. It may reflect a narrower retrieval purpose.
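A basic log review might count requests per Google user agent. The sketch below makes several assumptions: the log lines are invented examples in the common "combined" format, the IP addresses are placeholders, and the token list covers only a few user agents Google has documented. User-agent strings can also be spoofed, so a real audit would verify the requesting IP as well, for example with a reverse DNS lookup.

```python
import re
from collections import Counter

# Assumed tokens, checked in order; specific tokens come before the generic one.
UA_TOKENS = [
    ("Google-InspectionTool", "inspection fetch"),
    ("Googlebot-Image", "image crawler"),
    ("Googlebot", "search crawler"),
]

# Matches the request, status, referrer, and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def classify(user_agent):
    """Label a user-agent string by the first known token it contains."""
    for token, label in UA_TOKENS:
        if token in user_agent:
            return label
    return "other"


# Hypothetical log lines.
log_lines = [
    '192.0.2.1 - - [10/Jan/2025:10:00:00 +0000] "GET /services HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.2 - - [10/Jan/2025:10:00:05 +0000] "GET /logo.png HTTP/1.1" 200 901 "-" '
    '"Googlebot-Image/1.0"',
    '192.0.2.3 - - [10/Jan/2025:10:01:00 +0000] "GET /services HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Google-InspectionTool/1.0;)"',
    '198.51.100.7 - - [10/Jan/2025:10:02:00 +0000] "GET / HTTP/1.1" 200 4410 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

counts = Counter()
for line in log_lines:
    match = LOG_LINE.search(line)
    if match:
        counts[classify(match.group("ua"))] += 1

print(counts)
```

Even this small sample shows why raw request totals can mislead: two requests hit `/services`, but only one of them was the search crawler.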

Blocking the wrong thing can create unintended problems

Some site owners attempt to restrict bots without fully understanding what each agent does. Blocking a crawler may affect discovery. Blocking a fetcher tied to a specific Google feature may affect how content is processed elsewhere.

That does not mean every Google user agent should be allowed automatically, but rules should be intentional. Blanket bot blocking can create SEO and visibility problems that are surprisingly hard to trace.

Testing tools are not the same as full crawling behavior

When someone uses a “URL inspection” or “live test” feature, that often triggers a fetch of a specific page. It does not necessarily represent how Googlebot crawls the entire site at scale.

This is an important nuance. A page may fetch correctly in a test and still have broader crawl issues because of weak internal linking, poor canonicalization, or low crawl priority.

Better decisions come from technical precision

For businesses managing high-value websites, precision matters. Teams making decisions about robots rules, CDN settings, firewalls, rendering, and infrastructure need to know whether they are dealing with general crawling or targeted fetching.

That distinction becomes even more useful during migrations, redesigns, large content rollouts, or custom application development. It helps separate indexing problems from access problems and spot where the breakdown actually occurs.

In the end, the difference is simple: crawlers explore the web systematically, while fetchers retrieve specific content for defined purposes. But in SEO, simple distinctions often have outsized consequences. Understanding both helps businesses build sites that are easier for Google to access, interpret, and trust.

Frequently Asked Questions About Googlebot

What is Googlebot, and why is Googlebot important for SEO?

Googlebot is Google’s web-crawling system that discovers pages, revisits content, and sends information to Google’s indexing systems. Googlebot is important for SEO because if it cannot access, crawl, and interpret a page properly, that page is far less likely to be indexed or rank in search results.

How does Googlebot find and crawl pages on a website?

Googlebot typically discovers URLs through internal links, backlinks, XML sitemaps, redirects, and previous crawls. It then requests the page, may render JavaScript if needed, evaluates key signals like canonicals and metadata, and extracts more links to continue crawling the site.

Why does Googlebot crawl some pages but not index them?

Crawling and indexing are not the same. Googlebot may crawl a page, but Google can still decide not to index it if the content is thin, duplicated, blocked by directives, or not considered useful enough. Technical issues and mixed signals can also reduce index eligibility.

What helps Googlebot crawl a site more efficiently?

A clean site architecture, strong internal linking, XML sitemaps, fast server response times, and fewer low-value or duplicate URLs all help Googlebot crawl more efficiently. Managing robots directives carefully and reducing JavaScript-related rendering issues can also improve crawlability and indexation.

Can JavaScript prevent Googlebot from seeing my content?

Yes, it can. Googlebot can render many JavaScript-powered pages, but heavy client-side rendering, delayed content loading, or poorly implemented scripts may make important content harder to process. If critical information depends on JavaScript, technical SEO testing is essential to confirm Googlebot can access it.

What is the difference between a crawler and a fetcher in Google systems?

A crawler systematically discovers and revisits URLs across the web, while a fetcher retrieves a specific page or resource for a defined purpose. This matters because different Google user agents may request content for indexing, testing, rendering, previews, or other functions, and they do not all behave the same way.

Credits:

Vector version by dnet based on image by User:ChaTo, CC BY-SA 3.0, via Wikimedia Commons

Alessio Rigoli is the founder of AGR Technology. He got his start in IT, first in education and then in the private sector, helping businesses across various industries. Alessio maintains the blog and is interested in a range of current and emerging topics, including digital marketing, software development, cryptocurrency and blockchain, cyber security, Linux, and more.

Alessio Rigoli, AGR Technology
